ThInfo

Information Theory

Description: In this course, students are expected to acquire and master the basics of information theory. The following concepts will be covered: the source coding problem, the entropy of statistical samples and probability distributions, joint and conditional entropy, mutual information, the Kullback–Leibler divergence, and the maximum entropy principle. The use of prefix trees to design uniquely decodable codes will be illustrated through the example of Huffman’s optimal coding algorithm.

Learning outcomes: At the end of this course, students will be able to describe and explain the fundamental concepts of source coding and the associated issues; provide an intuitive understanding of the implications of the course concepts for the source coding problem; manipulate and analyze entropy from a mathematical perspective; define the Kullback–Leibler divergence and relate it to the notions of entropy and mutual information; and apply the principle of maximum entropy.

Evaluation methods: 2-hour written test (can be retaken)

Course supervisor: Paul Fraux

Geode ID: SPM-MAT-007

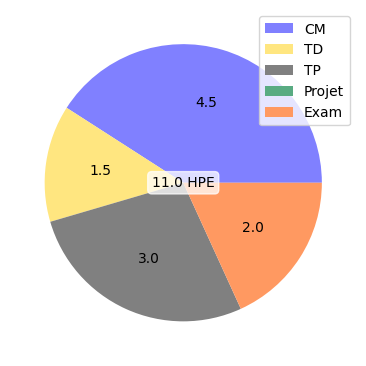

Lectures (CM):

- Source coding of discrete channels (1.5 h)

- Information theory 1/2 (1.5 h)

- Information theory 2/2 (1.5 h)

Tutorials (TD):

- Entropy and KL divergence (1.5 h)

Lab sessions (TP):

- Huffman coding (3.0 h)